In the rapidly evolving cloud computing landscape, AWS Step Functions has emerged as a cornerstone for developers looking to orchestrate complex, distributed applications in serverless implementations. The recent expansion of AWS SDK integrations marks a significant milestone, introducing support for 33 additional AWS services, including Amazon Q, AWS B2B Data Interchange, Amazon Bedrock, Amazon Neptune, and Amazon CloudFront KeyValueStore. This enhancement broadens the options for application development and opens new avenues for serverless data processing.

Serverless computing has revolutionized the way we build and scale applications, offering a way to execute code in response to events without the need to manage the underlying infrastructure. With the latest updates to AWS Step Functions, developers now have at their disposal a more extensive toolkit for creating serverless workflows that are not only scalable but also cost-efficient and less prone to errors.

In this blog, we will delve into the benefits and practical applications of these new integrations, with a special focus on serverless data processing. Whether you're managing massive datasets, streamlining business processes, or building real-time analytics solutions, the enhanced capabilities of AWS Step Functions can help you achieve more with less code. By leveraging these integrations, you can create workflows that directly invoke more than 11,000 API actions from more than 220 AWS services, simplifying the architecture and accelerating development cycles.
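As a rough illustration of what directly invoking an API action looks like, the sketch below builds a one-state Amazon States Language definition in Python. AWS SDK integrations use a resource of the form `arn:aws:states:::aws-sdk:<service>:<action>`; the helper names, the state name, and the choice of `s3:listBuckets` are assumptions made for this example only.

```python
import json
from typing import Optional


def sdk_task_resource(service: str, action: str) -> str:
    """Build the Resource ARN for an AWS SDK service integration.

    In this ARN form the service identifier is lowercase and the action
    is camelCase, for example "s3" and "listBuckets".
    """
    return f"arn:aws:states:::aws-sdk:{service}:{action}"


def single_task_definition(state_name: str, service: str, action: str,
                           parameters: Optional[dict] = None) -> dict:
    """Return a minimal state machine definition with a single Task state."""
    return {
        "StartAt": state_name,
        "States": {
            state_name: {
                "Type": "Task",
                "Resource": sdk_task_resource(service, action),
                "Parameters": parameters or {},
                "End": True,
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical example: one state that calls the S3 ListBuckets action.
    definition = single_task_definition("ListBuckets", "s3", "listBuckets")
    print(json.dumps(definition, indent=2))
```

The printed JSON is the kind of definition you would supply when creating a state machine; no Lambda function sits between the workflow and the service call.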

Practical Applications in Data Processing:

This AWS SDK integration with 33 new services not only broadens the scope of potential applications within the AWS ecosystem but also streamlines the execution of a wide range of data processing tasks. These integrations empower businesses with automated AI-driven data processing, streamlined EDI document handling, and enhanced content delivery performance.

Amazon Q Integration: Amazon Q is a generative AI-powered enterprise chat assistant designed to enhance employee productivity in various business operations. The integration of Amazon Q with AWS Step Functions enhances workflow automation by leveraging AI-driven data processing. This integration allows for efficient knowledge discovery, summarization, and content generation across various business operations. It enables quick and intuitive data analysis and visualization, particularly beneficial for business intelligence. In customer service, it provides real-time, data-driven solutions, improving efficiency and accuracy. It also offers insightful responses to complex queries, facilitating data-informed decision-making.

AWS B2B Data Interchange: Integrating AWS B2B Data Interchange with AWS Step Functions streamlines and automates electronic data interchange (EDI) document processing in business workflows. This integration allows for efficient handling of transactions including order fulfillment and claims processing. The low-code approach simplifies EDI onboarding, enabling businesses to utilize processed data in applications and analytics quickly. This results in improved management of trading partner relationships and real-time integration with data lakes, enhancing data accessibility for analysis. The detailed logging feature aids in error detection and provides valuable transaction insights, essential for managing business disruptions and risks.

Amazon CloudFront KeyValueStore: This integration enhances content delivery networks by providing fast, reliable access to data across global networks. It's particularly beneficial for businesses that require quick access to large volumes of data distributed worldwide, ensuring that the data is always available where and when it's needed.

Neptune Data: This integration allows the processing of graph data in a serverless environment, ideal for applications that rely on complex relationships and data patterns, such as social networks, recommendation engines, and knowledge graphs. For instance, Step Functions can orchestrate a series of tasks that ingest data into Neptune, execute graph queries, analyze the results, and then trigger other services based on those results, such as updating a dashboard or triggering alerts.

Amazon Timestream Query & Write: The integration is useful in serverless architectures for analyzing high-volume time-series data in real-time, such as sensor data, application logs, and financial transactions. Step Functions can manage the flow of data from ingestion (using Timestream Write) to analysis (using Timestream Query), including data transformation, anomaly detection, and triggering actions based on analytical insights.

Amazon Bedrock & Bedrock Runtime: AWS Step Functions can orchestrate generative AI workflows that call foundation models through Amazon Bedrock, for example preparing input data, invoking a model, and routing the output to analytics tools or storage systems. Step Functions can manage the flow of data across different Bedrock tasks, handling error retries and parallel processing efficiently.
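To make the retry handling concrete, here is a small sketch of how the wait times in a Step Functions `Retry` block grow. `IntervalSeconds`, `BackoffRate`, and `MaxAttempts` are standard Amazon States Language retry fields; the specific values and the `retry_delays` helper are assumptions for illustration, and the calculation ignores optional settings such as a maximum delay or jitter.

```python
# Hypothetical retry policy for a Task state (values chosen for illustration).
RETRY_POLICY = {
    "ErrorEquals": ["States.TaskFailed"],
    "IntervalSeconds": 2,   # wait before the first retry
    "BackoffRate": 2.0,     # multiplier applied to the wait after each retry
    "MaxAttempts": 3,       # number of retries before the error propagates
}


def retry_delays(interval_seconds: float, backoff_rate: float,
                 max_attempts: int) -> list:
    """Return the wait (in seconds) before each retry attempt."""
    return [interval_seconds * backoff_rate ** attempt
            for attempt in range(max_attempts)]


if __name__ == "__main__":
    delays = retry_delays(
        RETRY_POLICY["IntervalSeconds"],
        RETRY_POLICY["BackoffRate"],
        RETRY_POLICY["MaxAttempts"],
    )
    print(delays)  # [2.0, 4.0, 8.0]
```

With these example values a failing task is retried after 2, 4, and 8 seconds, so the workflow waits at most 14 seconds in total before giving up on that state.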

AWS Elemental MediaPackage V2: Step Functions can orchestrate video processing workflows that package, encrypt, and deliver video content, including invoking MediaPackage V2 actions to prepare video streams, monitoring encoding jobs, and updating databases or notification systems upon completion.

AWS Data Exports: With Step Functions, you can sequence tasks such as triggering data export actions, monitoring their progress, and executing subsequent data processing or notification steps upon completion. It can automate data export workflows that aggregate data from various sources, transform it, and then export it to a data lake or warehouse.

Benefits of the New Integrations

The recent integrations within AWS Step Functions bring forth a multitude of benefits that collectively enhance the efficiency, scalability, and reliability of data processing and workflow management systems. These advancements simplify the architectural complexity, reduce the necessity for custom code, and ensure cost efficiency, thereby addressing some of the most pressing challenges in modern data processing practices. Here's a summary of the key benefits:

Simplified Architecture: The new service integrations streamline the architecture of data processing systems, reducing the need for complex orchestration and manual intervention.

Reduced Code Requirement: With a broader range of integrations, less custom code is needed, facilitating faster deployment, lower development costs, and reduced error rates.

Cost Efficiency: By optimizing workflows and reducing the need for additional resources or complex infrastructure, these integrations can lead to significant cost savings.

Enhanced Scalability: The integrations allow systems to easily scale, accommodating increasing data loads and complex processing requirements without the need for extensive reconfiguration.

Improved Data Management: These integrations offer better control and management of data flows, enabling more efficient data processing, storage, and retrieval.

Increased Flexibility: With a wide range of services now integrated with AWS Step Functions, businesses have more options to tailor their workflows to specific needs, increasing overall system flexibility.

Faster Time-to-Insight: The streamlined processes enabled by these integrations allow for quicker data processing, leading to faster time-to-insight and decision-making.

Enhanced Security and Compliance: Integrating with AWS services ensures adherence to high security and compliance standards, which is essential for sensitive data processing and regulatory requirements.

Easier Integration with Existing Systems: These new integrations make it simpler to connect AWS Step Functions with existing systems and services, allowing for smoother digital transformation initiatives.

Global Reach: Services like Amazon CloudFront KeyValueStore enhance global data accessibility, ensuring high performance across geographical locations.

As businesses continue to navigate the challenges of digital transformation, these new AWS Step Functions integrations offer powerful solutions to streamline operations, enhance data processing capabilities, and drive innovation. At Cloudtech, we specialize in serverless data processing and event-driven architectures. Contact us today and ask how you can realize the benefits of these new AWS Step Functions integrations in your data architecture.

Related Resources

Small and medium-sized businesses (SMBs) that moved to the cloud have reduced their total cost of ownership (TCO) by up to 40%. Before migrating, they struggled with high infrastructure costs, unreliable systems, limited IT resources, and difficulty scaling or switching vendors.

By moving to AWS, businesses not only cut costs but also gain the ability to launch faster, scale on demand, and build customer-centric features. This makes them more efficient than their legacy-bound peers. Services like Amazon EC2, Amazon RDS, AWS Fargate, Amazon CloudWatch, and AWS Backup enable them to drive agility and resilience.

Today, some of the most competitive businesses are working with AWS partners to migrate their core workloads. This article covers the benefits of AWS cloud migration and how AWS partners can help SMBs maximize their return on investment and position themselves for long-term success.

Key takeaways:

- Migration is a modernization opportunity: Moving to AWS helps SMBs replace outdated infrastructure with scalable, secure, and cost-effective cloud environments that support long-term growth.

- Strategic execution maximizes benefits: Certified partners align each migration phase with business goals, ensuring the right AWS services are used, risks are minimized, and long-term value is realized.

- Data-driven planning ensures smarter outcomes: Tools like AWS Migration Evaluator reveal infrastructure gaps, compliance risks, and cost inefficiencies, enabling the development of an informed migration strategy.

- Security and scalability are built from the start: Architectures include multi-AZ deployments, automated backups, IAM controls, and real-time monitoring to ensure business continuity.

- Cloudtech simplifies the path to innovation: Along with cloud migration and modernization, Cloudtech helps SMBs adopt AI, improve governance, and continuously optimize for performance and cost.

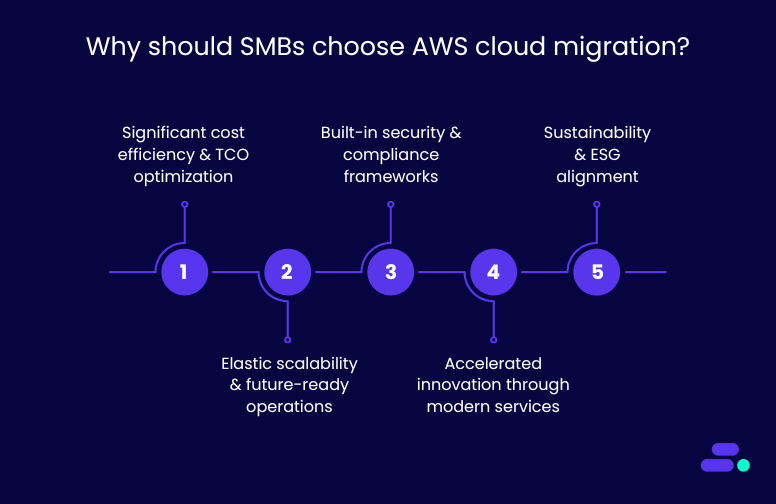

Why should SMBs choose AWS cloud migration?

Among the available options, AWS Cloud migration is a top choice for SMBs. It offers the depth, flexibility, and reliability needed to modernize with confidence. AWS provides over 200 services across compute, storage, analytics, AI/ML, and IoT. This makes it the most comprehensive cloud platform on the market.

Its global infrastructure includes the highest number of availability zones and regions. This ensures low-latency performance and high availability for users worldwide. AWS also has a large partner network, including Advanced Tier partners like Cloudtech, who guide SMBs through tailored migration plans.

With flexible pricing, built-in cost optimization tools, and strong third-party integrations, AWS is more adaptable than many rigid, bundled alternatives.

Here are some of the key reasons why businesses need AWS Cloud migration:

1. Significant cost efficiency and TCO optimization

Migrating to the AWS Cloud helps SMBs cut capital expenses tied to owning and maintaining on-premise hardware. It reduces costs related to servers, storage, power, cooling, security, and ongoing maintenance. Instead, businesses pay only for the resources they actually use.

AWS offers granular pricing options like reserved instances, savings plans, and auto-scaling. These help SMBs manage costs more effectively. Cloud migration also frees up IT teams from routine maintenance, so they can focus on innovation, product development, and improving customer experiences.

Take the example of a global IT staffing firm struggling with high Elastic licensing fees, frequent downtime, and a self-managed Elastic (ELK) stack that demanded eight engineers. With the help of Cloudtech, an AWS Advanced Tier Partner, it migrated its log analytics to a managed architecture using Amazon OpenSearch Service, AWS Fargate, Amazon EKS, and Amazon ECR. This eliminated the maintenance overhead and improved reliability.

The result: 40% lower costs, 80% less downtime, and real-time insights that now power faster, data-driven business decisions.

2. Elastic scalability and future-ready operations

AWS allows SMBs to scale resources instantly based on demand, whether it’s a traffic spike, seasonal peak, or business growth. This flexibility keeps operations efficient and avoids unnecessary overhead.

Built-in tools like infrastructure-as-code, automated monitoring, and centralized dashboards give teams better control and visibility. They can track performance, spot issues early, and adjust resources in real time.

Migration also sets the stage for long-term modernization. SMBs can adopt containerization, DevOps, and AI-driven automation to stay competitive.

3. Built-in security and compliance frameworks

AWS invests billions in securing its infrastructure, offering SMBs access to enterprise-grade security capabilities, including:

- Encryption at rest and in transit

- Multi-Factor Authentication (MFA)

- Identity and Access Management (IAM)

- Automated threat detection via Amazon GuardDuty

Beyond these, AWS supports over 90 security and compliance standards globally (e.g., GDPR, ISO 27001, HIPAA), allowing SMBs in regulated industries to meet requirements without building capabilities from scratch.

AWS Security Hub centralizes findings across Amazon GuardDuty, IAM, and AWS Config, making it easier for lean SMB teams to maintain a secure posture without managing dozens of tools. Alerts are prioritized by severity, and GuardDuty detects threats like suspicious IP access, brute-force attempts, or exposed ports.

Importantly, AWS operates on a shared responsibility model. While AWS secures the infrastructure, businesses maintain control over how they configure and protect their applications and data.

4. Accelerated innovation through modern services

AWS enables faster go-to-market and experimentation with modern services such as:

- Amazon Aurora for fully managed, scalable databases

- Amazon SageMaker for ML-based insights

- AWS Lambda for serverless computing

- Amazon QuickSight and Q Business for embedded analytics

For example, with Amazon Aurora, updates, failovers, and backups are handled automatically — no DBA needed. With Amazon SageMaker, you don’t need a dedicated ML team. Pre-built models and low-code tools let your developers build and deploy predictions using real business data.

These services empower SMBs to innovate with minimal upfront investment, enabling agile development cycles, real-time analytics, and intelligent automation.

5. Sustainability and ESG alignment

AWS’s energy-efficient, globally optimized data centers help SMBs cut operational costs and reduce their carbon footprint. With advanced cooling, efficient server use, and smart workload distribution, AWS consumes significantly less energy than traditional on-premise setups.

AWS achieves higher efficiency through advanced power utilization, custom hardware design, and global workload orchestration. For example, by shifting analytics workloads to regions powered by renewable energy, SMBs can directly reduce their compute-related emissions without changing their application code.

For SMBs aiming to meet ESG goals, migrating to AWS offers a clear path to sustainability without added complexity. AWS is on track to use 100% renewable energy by 2025, with many regions already powered by clean energy.

What You Get With AWS Cloud Migration

- Built-in automation (patching, failovers, scaling)

- Real-time visibility with Amazon CloudWatch and AWS Cost Explorer

- Security without headcount (MFA, Amazon GuardDuty, AWS Security Hub)

- Smarter cost control (Savings Plans + tagging setup)

- Modern services, minus steep learning curves (Amazon SageMaker, Amazon Aurora)

- Cleaner ESG profile without re-architecting apps

However, implementing AWS Cloud migration is no easy task. It requires careful planning and strategic guidance for achieving the best results.

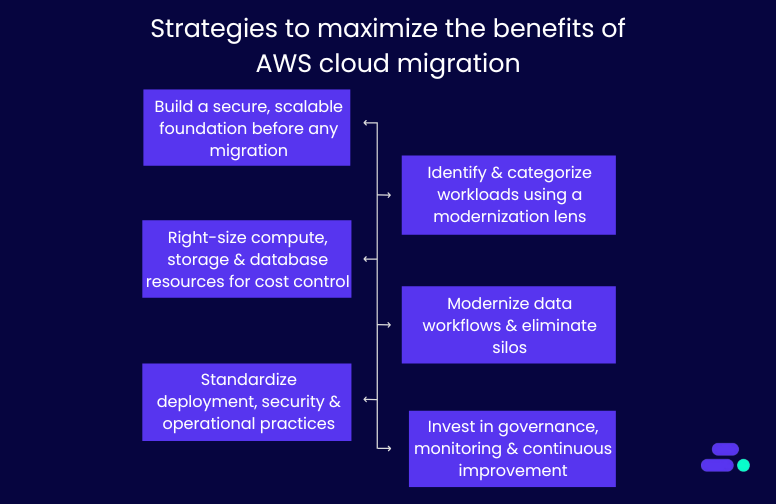

Strategies to maximize the benefits of AWS Cloud migration

Many SMBs still operate on aging on-prem servers, custom-built tools, and fragmented data systems. These environments are costly to maintain, difficult to scale, and limit innovation. Cloud migration offers a chance to rethink how IT drives growth and efficiency.

However, attempting this transition without expert support can lead to misconfigurations, downtime, or runaway costs. AWS partners bring deep technical expertise, proven frameworks, and real-world experience to guide SMBs through every step. They help ensure migrations are not only secure and smooth but also aligned with long-term business strategy.

Let’s break down six core strategies AWS partners follow to help SMBs migrate to the cloud and prepare them to lead in their markets, not just survive:

1. Build a secure, scalable foundation before any migration

Unstructured cloud adoption often leads to fragmented environments, inconsistent access controls, and long-term governance issues. That’s why experienced AWS partners begin by setting up a foundational landing zone using AWS Control Tower and multi-account architecture.

Key technical components include:

- Account segmentation: Workloads are isolated into separate accounts (e.g., dev, staging, production) using AWS Organizations, improving security and cost tracking.

- Network design: Virtual private clouds (VPCs) are built across multiple availability zones for fault tolerance and high availability.

- Security baselines: Partners enforce least-privilege IAM policies, default encryption (via AWS KMS), and logging using AWS CloudTrail.

- Automated guardrails: Tools like AWS Config and service control policies (SCPs) ensure compliance and prevent misconfigurations.

This upfront setup prevents issues down the line and ensures your cloud environment scales without exposing security or operational risks.
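As one example of an automated guardrail, the sketch below builds a service control policy (SCP) document that stops member accounts from turning off audit logging. The `cloudtrail:StopLogging` and `cloudtrail:DeleteTrail` actions are real IAM actions; the function name and the statement ID are assumptions for illustration, and a real landing zone would typically combine several such policies.

```python
import json


def deny_cloudtrail_tampering_scp() -> dict:
    """Return an SCP document that denies disabling or deleting CloudTrail trails."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyCloudTrailTampering",  # hypothetical statement ID
                "Effect": "Deny",
                "Action": [
                    "cloudtrail:StopLogging",
                    "cloudtrail:DeleteTrail",
                ],
                "Resource": "*",
            }
        ],
    }


if __name__ == "__main__":
    # The JSON output is what would be attached to an organizational unit
    # or account through AWS Organizations.
    print(json.dumps(deny_cloudtrail_tampering_scp(), indent=2))
```

Because SCPs set the maximum permissions for an account, a deny like this applies even to administrators inside that account.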

2. Identify and categorize workloads using a modernization lens

Not every workload should be treated the same. SMBs often have legacy ERP systems, aging virtual machines, or custom scripts that are no longer efficient or scalable. AWS partners use various evaluation tools to profile and categorize each workload.

The strategy is to assess current infrastructure across dimensions, including resource usage (CPU, memory, disk I/O), software stack (OS, dependencies, licenses), system interdependencies, and compliance needs (HIPAA, PCI, GDPR). It also accounts for existing costs, including hardware, facilities, and support.

This analysis shapes a tailored cloud migration strategy using the 7 Rs framework:

- Rehost (lift-and-shift): Move applications as-is from on-premises to AWS without major changes. For example, a healthcare provider moves its legacy appointment scheduling software from local servers to Amazon EC2. No code changes are made, but the system now benefits from cloud uptime and centralized management.

- Replatform (lift-tinker-and-shift): Make minimal changes to optimize the app for cloud, often switching databases or OS-level services. For example, an SMB in financial services moves from an on-prem Oracle database to Amazon RDS for PostgreSQL, reducing licensing costs while maintaining similar functionality and improving automated backups and patching.

- Refactor (re-architect): Redesign the application to take full advantage of cloud-native features like microservices, containers, or serverless. For example, a patient intake form system is rebuilt using AWS Lambda, Amazon API Gateway, and Amazon DynamoDB, enabling the healthcare company to scale intake automatically without paying for idle resources.

- Repurchase: Switch from a legacy, self-managed system to a SaaS or AWS Marketplace alternative. For example, a retail business retires its in-house CRM and adopts Salesforce or Zendesk hosted on AWS to modernize customer support and reduce infrastructure maintenance.

- Retire: Shut down systems or services that are no longer useful. For example, during migration discovery, an SMB identifies two reporting tools that are no longer used. These are retired, reducing licensing fees and operational overhead.

- Retain: Keep certain applications or workloads on-prem temporarily or permanently, especially if they're not cloud-ready. For example, a healthcare firm retains its legacy PACS system (used for radiology imaging), due to latency and compliance requirements, while migrating surrounding services like scheduling, billing, and analytics to AWS.

- Relocate: Move large-scale workloads (e.g., VMware or Hyper-V environments) directly into AWS without refactoring. For example, an SMB with hundreds of virtual machines running internal applications uses VMware Cloud on AWS to relocate its existing virtualization stack into the cloud for faster migration and operational consistency.

Read More: AWS cloud migration strategies explained: A practical guide.

These 7 strategies are typically used together during the cloud modernization engagement. Each workload is evaluated and categorized to ensure a strategic, cost-effective, and business-aligned migration.
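The categorization step can be pictured as a simple decision function. The sketch below is only an illustration: the `Workload` fields and the order of the checks are assumptions, not a rule set that AWS or any partner prescribes, and a real assessment weighs far more inputs (cost, licensing, dependencies, compliance).

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical summary of one workload after discovery."""
    name: str
    in_use: bool = True             # still needed by the business?
    cloud_ready: bool = True        # no latency/compliance blockers?
    saas_alternative: bool = False  # could a SaaS product replace it?
    runs_on_vmware: bool = False    # part of an existing VMware estate?
    needs_redesign: bool = False    # worth rebuilding as cloud-native?
    minor_tuning_ok: bool = False   # small changes (e.g. managed DB) suffice?


def suggest_strategy(w: Workload) -> str:
    """Map a workload to one of the 7 Rs using simple, illustrative rules."""
    if not w.in_use:
        return "Retire"
    if not w.cloud_ready:
        return "Retain"
    if w.saas_alternative:
        return "Repurchase"
    if w.runs_on_vmware:
        return "Relocate"
    if w.needs_redesign:
        return "Refactor"
    if w.minor_tuning_ok:
        return "Replatform"
    return "Rehost"


if __name__ == "__main__":
    portfolio = [
        Workload("unused-reporting-tool", in_use=False),
        Workload("pacs-imaging", cloud_ready=False),
        Workload("in-house-crm", saas_alternative=True),
        Workload("oracle-db", minor_tuning_ok=True),
        Workload("scheduling-app"),
    ]
    for w in portfolio:
        print(f"{w.name}: {suggest_strategy(w)}")
```

The example portfolio mirrors the cases described above: the unused reporting tool is retired, the PACS system is retained, the CRM is repurchased, the Oracle database is replatformed, and the scheduling app is rehosted.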

3. Right-size compute, storage, and database resources for cost control

SMBs often overspend on cloud when workloads are lifted without optimization. So, AWS partners right-size every component to match real-world usage and align with budget constraints.

Key tactics include:

- Amazon EC2 instance sizing based on actual utilization trends over time, not static estimations.

- Storage tiering using Amazon S3 Intelligent-Tiering, EBS volume optimization, and lifecycle policies for cold data.

- Relational database migration to Amazon RDS or Amazon Aurora, with automated backups, replication, and patching.

- Auto Scaling Groups (ASGs) to handle variable traffic without overprovisioning.

- Pricing models like Savings Plans and Spot Instances can reduce ongoing compute costs.

This ensures the environment is financially sustainable as workloads increase over time.
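Sizing from actual utilization, rather than static estimates, usually means looking at a high percentile of observed usage. The sketch below shows one way to express that idea; the 40% and 80% thresholds, the function names, and the sample data are assumptions for illustration, not AWS recommendations.

```python
def percentile(samples: list, pct: int) -> float:
    """Nearest-rank percentile (pct in 1..100) of a non-empty list."""
    ordered = sorted(samples)
    # Ceiling division keeps the rank calculation in integer arithmetic.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def rightsizing_hint(cpu_samples: list, low: float = 40.0,
                     high: float = 80.0) -> str:
    """Suggest a sizing action from the 95th percentile of CPU utilization (%)."""
    p95 = percentile(cpu_samples, 95)
    if p95 < low:
        return "downsize"
    if p95 > high:
        return "upsize"
    return "keep"


if __name__ == "__main__":
    # Hypothetical hourly CPU utilization readings for one instance.
    cpu = [10, 12, 15, 20, 25, 30, 18, 22, 14, 16]
    print(percentile(cpu, 95))    # 30
    print(rightsizing_hint(cpu))  # downsize
```

In practice the samples would come from a monitoring source covering several weeks, so that seasonal peaks are included before an instance is shrunk.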

4. Modernize data workflows and eliminate silos

Many SMBs store customer, sales, and operations data across disconnected platforms, limiting visibility and adding manual overhead. Cloud migration offers a chance to rebuild data infrastructure for real-time insights and scale.

AWS partners introduce:

- Centralized data lakes on Amazon S3, partitioned and cataloged using AWS Glue Data Catalog.

- ETL pipelines using AWS Glue, AWS Lambda, and AWS Step Functions to automate data ingestion and transformation.

- Analytics layers via Amazon Athena, Amazon Redshift, or Amazon QuickSight, replacing static reports with interactive dashboards.

- Data governance using AWS Lake Formation and IAM roles to control who can access sensitive data.

This structure supports everything from executive reporting to compliance audits, AI workloads, and process automation.
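Partitioning a data lake usually comes down to a consistent object key layout. The sketch below generates Hive-style keys (`year=/month=/day=`), a convention that catalog and query engines can map to partitions; the dataset name, file name, and function name are assumptions for illustration.

```python
from datetime import date


def partitioned_key(dataset: str, event_date: date, filename: str) -> str:
    """Build a Hive-style partitioned object key for a data lake."""
    return (
        f"{dataset}/"
        f"year={event_date.year:04d}/"
        f"month={event_date.month:02d}/"
        f"day={event_date.day:02d}/"
        f"{filename}"
    )


if __name__ == "__main__":
    key = partitioned_key("sales", date(2024, 3, 7), "part-0001.parquet")
    print(key)  # sales/year=2024/month=03/day=07/part-0001.parquet
```

Queries that filter on date can then skip every prefix outside the requested range, which reduces both the data scanned and the cost of the query.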

5. Standardize deployment, security, and operational practices

Legacy environments often depend on manual scripts and ad hoc changes, increasing the risk of errors and downtime. Migration is the ideal time to implement standardization using DevOps and infrastructure-as-code (IaC).

Partners help implement:

- IaC templates with AWS CloudFormation to make infrastructure reproducible and auditable.

- CI/CD pipelines with AWS CodePipeline and AWS CodeBuild for automated deployments.

- Secrets management using AWS Secrets Manager to avoid storing sensitive data in code.

- Automated rollbacks, blue/green deployments, and health checks to reduce deployment risks.

This shift reduces downtime, accelerates time-to-market, and improves software reliability.
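To give a sense of what reproducible and auditable infrastructure means, the sketch below generates a minimal AWS CloudFormation template for a versioned, encrypted S3 bucket. The resource type and property names follow the `AWS::S3::Bucket` resource; the logical ID, description, and function name are assumptions for illustration.

```python
import json


def encrypted_bucket_template(logical_id: str = "DataBucket") -> dict:
    """Return a CloudFormation template with one versioned, KMS-encrypted bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Illustrative template: versioned, encrypted S3 bucket",
        "Resources": {
            logical_id: {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    # Keep prior object versions so changes can be rolled back.
                    "VersioningConfiguration": {"Status": "Enabled"},
                    # Encrypt new objects by default with AWS KMS.
                    "BucketEncryption": {
                        "ServerSideEncryptionConfiguration": [
                            {
                                "ServerSideEncryptionByDefault": {
                                    "SSEAlgorithm": "aws:kms"
                                }
                            }
                        ]
                    },
                },
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(encrypted_bucket_template(), indent=2))
```

Because the template lives in version control, every change to the bucket's configuration is reviewed and recorded like any other code change.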

6. Invest in governance, monitoring, and continuous improvement

Once migrated, workloads require active governance and observability to avoid sprawl, overuse, or compliance issues. AWS Partners stay engaged post-migration to optimize the environment over time.

This includes:

- Cost governance using AWS Budgets, AWS Cost Explorer, and tagging policies for project- or team-level reporting.

- Observability through Amazon CloudWatch dashboards, AWS CloudTrail logs, and custom alarms for anomalies or failures.

- Security hygiene using AWS Security Hub, Amazon GuardDuty, and vulnerability scanning tools.

- Change management with access controls, patching automation, and audit trails.

- Training sessions to upskill your internal team on managing AWS environments confidently.
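Tag-based cost reporting, mentioned in the first item above, is essentially a group-by over billing line items. The sketch below assumes a simplified record shape (`cost` plus a `tags` dictionary) invented for this example; real cost and usage exports have many more fields.

```python
from collections import defaultdict


def cost_by_tag(line_items: list, tag_key: str,
                untagged_label: str = "untagged") -> dict:
    """Sum cost per value of one tag key; untagged items are grouped together."""
    totals = defaultdict(float)
    for item in line_items:
        label = item.get("tags", {}).get(tag_key, untagged_label)
        totals[label] += item["cost"]
    return dict(totals)


if __name__ == "__main__":
    # Hypothetical line items for illustration.
    items = [
        {"service": "AmazonEC2", "cost": 10.0, "tags": {"team": "web"}},
        {"service": "AmazonRDS", "cost": 5.5, "tags": {"team": "data"}},
        {"service": "AmazonS3", "cost": 4.5, "tags": {"team": "web"}},
        {"service": "AmazonEC2", "cost": 2.0},
    ]
    print(cost_by_tag(items, "team"))
```

The size of the untagged bucket is itself a useful signal: a large share of untagged spend suggests the tagging policy is not being enforced.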

In addition, AWS Partners help build roadmaps for future innovation, whether that’s rolling out Amazon SageMaker for ML, Amazon Q Business for conversational analytics, or expanding into new regions.

Each of these strategies utilized by AWS partners ensures that SMBs don’t just replicate legacy inefficiencies in the cloud.

Therefore, with the right AWS partner, businesses can build a secure, scalable, and future-ready foundation that evolves with their goals.

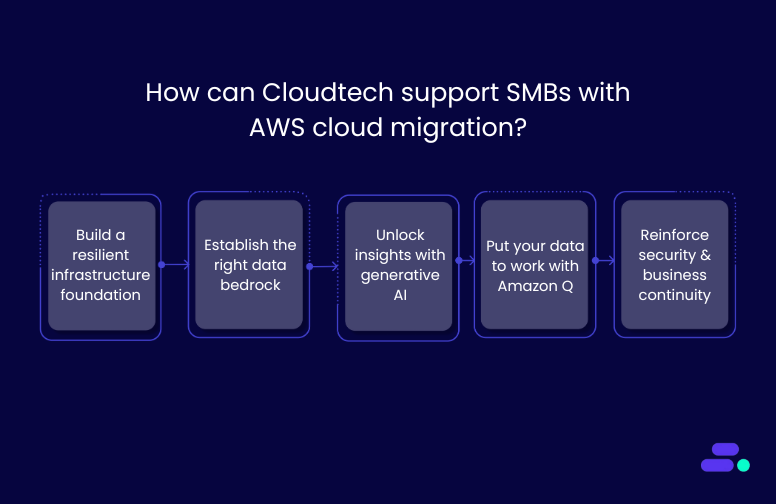

How can Cloudtech support SMBs with AWS Cloud migration?

Cloudtech is an AWS Advanced Tier Partner that has helped multiple SMBs migrate from legacy systems to secure, scalable AWS environments with minimal disruption and maximum ROI. Their approach is tailored, efficient, and aligned with real business needs.

For businesses ready for AWS Cloud migration, Cloudtech can:

- Build a resilient infrastructure foundation: Cloudtech sets up the AWS environment with scalable compute and storage, account governance via AWS Control Tower, and baseline security controls. Through expert-led configuration and knowledge transfer, it creates a strong, adaptable foundation that grows with the business.

- Establish the right data bedrock: From database migrations to ETL pipelines and AWS Data Lake architecture, Cloudtech ensures data is ready for analytics and AI. This solid foundation improves accessibility, eliminates silos, and accelerates decision-making across teams.

- Unlock insights with AI: Cloudtech uses Amazon Textract to extract structured data from physical or handwritten documents. This extracted content can then be processed using tools like Amazon Comprehend to identify key insights, classify information, or detect entities. This eliminates manual entry and unlocks real business intelligence from previously unstructured documents.

- Put your data to work with Amazon Q: Cloudtech enables SMBs to use Amazon Q Business and Amazon Q in QuickSight to automate tasks, summarize data, and generate content. The result: faster decision-making, increased productivity, and better business outcomes through AI-powered intelligence.

- Reinforce security and business continuity: Cloudtech integrates cloud-native security best practices, proactive chaos engineering, automated backups, and disaster recovery strategies to enhance security and ensure business continuity. These tools help reduce downtime, safeguard data, and keep operations running, even in unpredictable scenarios.

Cloudtech doesn’t just migrate workloads. It equips SMBs with a secure, data-ready, and AI-optimized AWS environment—built for growth, efficiency, and long-term impact.

Conclusion

Migrating to AWS is more than a technology shift. It’s a strategic move toward building a scalable, secure, and future-ready business. With the right partner, SMBs can transform aging infrastructure into a flexible cloud environment that reduces costs, improves resilience, and unlocks innovation.

For SMBs ready to modernize with confidence, Cloudtech delivers tailored AWS migration services grounded in best practices and real-world impact. Whether you're moving a few workloads or re-architecting your entire IT landscape, Cloudtech helps you go further, faster. Get started now!

FAQs

1. What are the typical starting points for SMBs considering AWS migration?

Most SMBs begin with workloads that are costly to maintain or hard to scale on-premises, such as databases, file servers, or ERP systems. Cloudtech helps prioritize which workloads to migrate first based on business impact, cost savings, and technical feasibility.

2. How long does a cloud migration project take with Cloudtech?

Timelines vary by scope, but most SMB migrations can be completed in phases over 6 to 12 weeks. Cloudtech’s phased approach ensures minimal disruption by aligning migration waves with business operations and readiness.

3. What AWS tools are used to support a smooth migration?

Cloudtech uses AWS Migration Evaluator, AWS Application Migration Service (AWS MGN), AWS Database Migration Service (AWS DMS), and the AWS Well-Architected Framework to ensure secure and efficient migration with minimal downtime.

4. Can Cloudtech help with compliance during and after migration?

Cloudtech builds AWS environments that follow security and compliance best practices aligned with standards like HIPAA, SOC 2, and PCI-DSS. While it does not perform formal audits or certification processes, Cloudtech ensures the infrastructure and automation it implements are audit-ready.

5. What happens after the migration is complete?

Cloudtech provides ongoing support, training, and optimization. This includes infrastructure monitoring, cost management, DevOps enablement, and roadmaps for adopting AI, serverless, and containerized applications in the future.

As data volumes continue to grow exponentially, small and medium-sized businesses (SMBs) face multiple challenges in managing, processing, and analyzing their data efficiently.

A well-structured data lake on AWS enables businesses to consolidate structured, semi-structured, and unstructured data in one location, making it easier to extract insights and inform decisions.

According to IDC, the global datasphere is projected to reach 163 zettabytes by the end of 2025, highlighting the urgent need for scalable, cloud-first data strategies.

This blog explores how SMBs can build effective ETL (Extract, Transform, Load) processes using AWS services and modernize their data infrastructure for improved performance and insight.

Key takeaways

- Importance of ETL pipelines for SMBs: ETL pipelines are crucial for SMBs to integrate and transform data within an AWS data lake.

- AWS services powering ETL workflows: AWS Glue, Amazon S3, Amazon Athena, and Amazon Kinesis enable scalable, secure, and cost-efficient ETL workflows.

- Best practices for security and performance: Strong security measures, access control, and performance optimization are crucial to meet compliance requirements.

- Real-world ETL applications: Examples demonstrate how AWS-powered ETL supports diverse industries and handles varying data volumes effectively.

- Cloudtech’s role in ETL pipeline development: Cloudtech helps SMBs build tailored, reliable ETL pipelines that simplify cloud modernization and unlock valuable data insights.

What is ETL?

ETL stands for extract, transform, and load. It is a process used to combine data from multiple sources into a centralized storage environment, such as an AWS data lake.

Through a set of defined business rules, ETL helps clean, organize, and format raw data to make it usable for storage, analytics, and machine learning applications.

This process enables SMBs to achieve specific business intelligence objectives, including generating reports, creating dashboards, forecasting trends, and enhancing operational efficiency.
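The three stages are easier to picture with a tiny end-to-end example. The sketch below uses only the Python standard library and an in-memory SQLite database as a stand-in for the target store; the sample data, table name, and the business rule (drop orders without an amount, normalize customer names) are assumptions for illustration.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with inconsistent formatting and a missing amount.
RAW_CSV = """order_id,customer,amount
1001, alice ,19.99
1002,BOB,5.00
1003,carol,
"""


def extract(raw: str) -> list:
    """Extract: parse the raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(raw)))


def transform(rows: list) -> list:
    """Transform: apply business rules and convert values to proper types."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # rule: skip orders that have no amount
        cleaned.append(
            (int(row["order_id"]), row["customer"].strip().title(), float(amount))
        )
    return cleaned


def load(rows: list, conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    load(transform(extract(RAW_CSV)), connection)
    print(connection.execute("SELECT * FROM orders").fetchall())
```

On AWS the same shape typically maps to managed services, for example source files in Amazon S3, transformation jobs in AWS Glue, and a data lake or warehouse as the target.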

Why is ETL important for businesses?

Businesses, and SMBs in particular, typically manage structured and unstructured data from a variety of sources, including:

- Customer data from payment gateways and CRM platforms

- Inventory and operations data from vendor systems

- Sensor data from IoT devices

- Marketing data from social media and surveys

- Employee data from internal HR systems

Without a consistent process in place, this data remains siloed and difficult to use. ETL helps convert these individual datasets into a structured format that supports meaningful analysis and interpretation.

By utilizing AWS services, businesses can develop scalable ETL pipelines that enhance the accessibility and actionability of their data.

The evolution of ETL from legacy systems to cloud solutions

ETL (Extract, Transform, Load) has come a long way from its origins in structured, relational databases. Initially designed to convert transactional data into relational formats for analysis, early ETL processes were rigid and resource-intensive.

1. Traditional ETL

In traditional systems, data resided in transactional databases optimized for recording activities, rather than for analysis and reporting.

ETL tools helped transform and normalize this data into interconnected tables, enabling fundamental trend analysis through SQL queries. However, these systems struggled with data duplication, limited scalability, and inflexible formats.

2. Modern ETL

Today’s ETL is built for the cloud. Modern tools support real-time ingestion, unstructured data formats, and scalable architectures like data warehouses and data lakes.

- Data warehouses store structured data in optimized formats for fast querying and reporting.

- Data lakes accept structured, semi-structured, and unstructured data, supporting a wide range of analytics, including machine learning and real-time insights.

This evolution enables businesses to process more diverse data at higher speeds and scales, all while utilizing cost-efficient cloud-native tools like those offered by AWS.

How does ETL work?

At a high level, ETL moves raw data from various sources into a structured format for analysis. It helps businesses centralize, clean, and prepare data for better decision-making.

Here’s how ETL typically flows in a modern AWS environment:

- Extract: Pulls data from multiple sources, including databases, CRMs, IoT devices, APIs, and other data sources, into a centralized environment, such as Amazon S3.

- Transform: Converts, enriches, or restructures the extracted data. This could include cleaning up missing fields, formatting timestamps, or joining data sets using AWS Glue or Apache Spark.

- Load: Places the transformed data into a destination such as Amazon Redshift (a data warehouse), or back into Amazon S3 for analytics using services like Amazon Athena.

Together, these stages power modern data lakes on AWS, letting businesses analyze data in real-time, automate reporting, or feed machine learning workflows.
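
To make the three stages concrete, here is a minimal sketch using only the Python standard library. It is not tied to any AWS service: the sample CSV, the field names, and the assumption that source timestamps are already in UTC are all hypothetical and chosen purely for illustration.

```python
import csv
import io
import json
from datetime import datetime, timezone

# Hypothetical raw export; in practice this would come from a source system.
RAW_CSV = """order_id,customer,amount,ordered_at
1001,Acme Co,250.00,2025-01-15 09:30:00
1002,,99.50,2025-01-15 10:05:00
1003,Globex,not-a-number,2025-01-16 14:45:00
"""


def extract(raw_text):
    """Extract: parse raw CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transform: drop unusable rows, fill gaps, and normalize timestamps."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows whose amount cannot be parsed
        ordered_at = datetime.strptime(row["ordered_at"], "%Y-%m-%d %H:%M:%S")
        cleaned.append({
            "order_id": int(row["order_id"]),
            "customer": row["customer"] or "UNKNOWN",  # fill missing field
            "amount": amount,
            # Assumption: source timestamps are already in UTC.
            "ordered_at": ordered_at.replace(tzinfo=timezone.utc).isoformat(),
        })
    return cleaned


def load(records):
    """Load: serialize to JSON Lines (one JSON object per line)."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)


records = transform(extract(RAW_CSV))
output = load(records)
print(output)
```

In a real pipeline the `load` step would write to a destination such as Amazon S3 rather than return a string; the separation into three small functions is the part that carries over.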

What are the design principles for ETL in AWS data lakes?

Designing ETL processes for AWS data lakes involves optimizing for scalability, fault tolerance, and real-time analytics. Key principles include using AWS Glue for serverless ETL and orchestration, Amazon S3 for high-volume, durable storage, and Amazon Athena and AWS Lambda for querying and lightweight transformation. An effective design also focuses on cost control, security, and maintaining data lineage with automated workflows and minimal manual intervention.

- Event sourcing and processing within AWS services

Use event-driven architectures with AWS tools such as Amazon Kinesis or AWS Lambda. These services enable real-time data capture and processing, which keeps data current and workflows scalable without manual intervention.

- Storing data in open file formats for compatibility

Adopt open file formats like Apache Parquet or ORC. These formats improve interoperability across AWS analytics and machine learning services while optimizing storage costs and query performance.

- Ensuring performance optimization in ETL processes

Utilize AWS services such as AWS Glue and Amazon EMR for efficient data transformation. Techniques like data partitioning and compression help reduce processing time and minimize cloud costs.

- Incorporating data governance and access control

Maintain data security and compliance by using AWS IAM (Identity and Access Management), AWS Lake Formation, and encryption. These tools provide granular access control and protect sensitive information throughout the ETL pipeline.

By following these design principles, businesses can develop ETL processes that not only meet their current analytics needs but also scale as their data volume increases.
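
As one illustration of the partitioning technique mentioned above, the sketch below builds Hive-style object keys (`year=.../month=.../day=...`), a layout that query engines such as Amazon Athena can use to skip irrelevant data. The dataset name and file name are hypothetical.

```python
from datetime import date


def partitioned_key(dataset, event_date, filename):
    """Build a Hive-style partitioned object key for a given event date."""
    return (
        f"{dataset}/"
        f"year={event_date.year:04d}/"
        f"month={event_date.month:02d}/"
        f"day={event_date.day:02d}/"
        f"{filename}"
    )


# Hypothetical dataset and file names, for illustration only.
key = partitioned_key("orders", date(2025, 1, 15), "part-0000.parquet")
print(key)
```

Partitioning by date is only one option; the right partition columns depend on how the data is usually filtered in queries.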

AWS services supporting ETL processes

AWS provides a suite of services that simplify ETL workflows and help SMBs build scalable, cost-effective data lakes. Here are the key AWS services supporting ETL processes:

1. Utilizing AWS Glue data catalog and crawlers

AWS Glue data catalog organizes metadata and makes data searchable across multiple sources. Glue crawlers automatically scan data in Amazon S3, updating the catalog to keep it current without manual effort.

2. Building ETL jobs with AWS Glue

AWS Glue provides a serverless environment for creating, scheduling, and monitoring ETL jobs. It supports data transformation using Apache Spark, enabling SMBs to clean and prepare data for analytics without managing infrastructure.

3. Integrating with Amazon Athena for query processing

Amazon Athena allows businesses to run standard SQL queries directly on data stored in Amazon S3. It works seamlessly with the Glue data catalog, enabling quick, ad hoc analysis without the need for complex data movement.

4. Using Amazon S3 for data storage

Amazon Simple Storage Service (S3) serves as the central repository for raw and processed data in a data lake. It offers durable, scalable, and cost-efficient storage, supporting multiple data formats and integration with other AWS analytics services.

Together, these AWS services form a comprehensive ETL ecosystem that enables SMBs to manage and analyze their data effectively.
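
As a rough sketch of the Athena step, the function below submits a SQL query through an Athena client's `start_query_execution` call. The client is passed in as a parameter so the example runs offline with a small stand-in class; the database name, table, SQL, and results bucket are all hypothetical.

```python
def run_athena_query(athena_client, sql, database, output_location):
    """Submit a SQL query to Athena and return its query execution ID."""
    response = athena_client.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )
    return response["QueryExecutionId"]


class FakeAthenaClient:
    """Stand-in for a real Athena client so the sketch runs without AWS."""

    def __init__(self):
        self.calls = []

    def start_query_execution(self, **kwargs):
        self.calls.append(kwargs)
        return {"QueryExecutionId": "example-query-id"}


fake = FakeAthenaClient()
query_id = run_athena_query(
    fake,
    "SELECT customer, SUM(amount) AS total FROM orders GROUP BY customer",
    database="sales_lake",  # hypothetical Glue Data Catalog database
    output_location="s3://example-athena-results/",  # hypothetical bucket
)
print(query_id)
```

With real credentials, `boto3.client("athena")` would take the place of the fake client. Athena runs queries asynchronously, so a production version would also poll for completion before reading results.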

Steps to construct ETL pipelines in AWS

The following steps outline a how-to approach to constructing ETL pipelines with AWS services, with Cloudtech guiding businesses at every stage of the modernization journey.

1. Mapping structured and unstructured data sources

Begin by identifying all data sources, including structured sources like CRM and ERP systems, as well as unstructured sources such as social media, IoT devices, and customer feedback. This step ensures full data visibility and sets the foundation for effective integration.

2. Creating ingestion pipelines into object storage

Use services like AWS Glue or Amazon Kinesis to ingest real-time or batch data into Amazon S3. Amazon S3 serves as the central storage layer in a data lake, offering the flexibility to store data in raw, transformed, or enriched formats.

3. Developing ETL pipelines for data transformation

Once ingested, use AWS Glue to build and manage ETL workflows. This step involves cleaning, enriching, and structuring data to make it ready for analytics. AWS Glue supports Spark-based transformations, enabling efficient processing without manual provisioning.

4. Implementing ELT pipelines for analytics

In some use cases, it is more effective to load raw data into Amazon Redshift or query directly from S3 using Amazon Athena.

This approach, known as ELT (extract, load, transform), allows SMBs to analyze large volumes of data quickly without heavy transformation steps upfront.
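
Once a Glue job exists, the transformation step can be triggered programmatically. The sketch below assumes a Glue client exposing `start_job_run` and uses a stand-in class so it runs without AWS access; the job name, argument names, and S3 paths are hypothetical.

```python
def start_etl_job(glue_client, job_name, source_path, target_path):
    """Start a Glue job run and return its run ID."""
    response = glue_client.start_job_run(
        JobName=job_name,
        # Job arguments are passed as string key/value pairs; the "--" prefix
        # is the usual convention for custom job parameters.
        Arguments={
            "--source_path": source_path,
            "--target_path": target_path,
        },
    )
    return response["JobRunId"]


class FakeGlueClient:
    """Stand-in for a real Glue client so the sketch runs offline."""

    def __init__(self):
        self.calls = []

    def start_job_run(self, **kwargs):
        self.calls.append(kwargs)
        return {"JobRunId": "jr_example"}


fake_glue = FakeGlueClient()
run_id = start_etl_job(
    fake_glue,
    job_name="clean-orders",  # hypothetical job name
    source_path="s3://example-data-lake/raw/orders/",
    target_path="s3://example-data-lake/curated/orders/",
)
print(run_id)
```

In practice `boto3.client("glue")` would replace the fake client, and the job script itself would read these arguments to decide what to transform.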

Best practices for security and access control

Security and governance are essential parts of any ETL workflow, especially for SMBs that manage sensitive or regulated data. The following best practices help SMBs stay secure, compliant, and audit-ready from day one.

1. Ensuring data security and compliance

Use AWS Key Management Service (KMS) to manage the keys that encrypt data at rest, enforce TLS for data in transit, and apply policies that restrict access to encryption keys. Consider enabling Amazon Macie to automatically discover and classify sensitive data, such as personally identifiable information (PII).

For regulated industries like healthcare, ensure all data handling processes align with standards such as HIPAA, HITRUST, or GDPR. AWS Config can help enforce compliance by tracking changes to configurations and alerting when policies are violated.

2. Managing user access with AWS Identity and Access Management (IAM)

Create IAM policies based on the principle of least privilege, giving users only the permissions required to perform their tasks. Use IAM roles to grant temporary access for third-party tools or workflows without compromising long-term credentials.

For added security, enable multi-factor authentication (MFA) and use AWS Organizations to apply access boundaries across business units or teams.

3. Implementing effective monitoring and logging practices

Use AWS CloudTrail to log all API activity, and integrate Amazon CloudWatch for real-time metrics and automated alerts. Pair this with Amazon GuardDuty to detect unexpected behavior or potential security threats, such as data exfiltration attempts or unusual API calls.

Logging and monitoring are particularly important for businesses working with sensitive healthcare data, where early detection of irregularities can prevent compliance issues or data breaches.

4. Auditing data access and changes regularly

Set up regular audits of who accessed what data and when. AWS Lake Formation offers fine-grained access control, enabling centralized permission tracking across services.

SMBs can use these insights to identify access anomalies, revoke outdated permissions, and prepare for internal or external audits.

5. Isolating environments using VPCs and security groups

Isolate ETL components across development, staging, and production environments using Amazon Virtual Private Cloud (VPC).

Apply security groups and network ACLs to control traffic between resources. This reduces the risk of accidental data exposure and ensures production data remains protected during testing or development.

By following these practices, SMBs can build trust into their data pipelines and reduce the likelihood of security incidents.
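
To illustrate the least-privilege principle described above, the sketch below generates an IAM policy document that grants read-only access to a single prefix in one bucket. The bucket name, prefix, and statement IDs are hypothetical; a real policy should be reviewed against the actual access needs of each role.

```python
import json


def read_only_prefix_policy(bucket, prefix):
    """Build an IAM policy allowing list and read on one S3 prefix only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ListPrefixOnly",
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
                # Restrict listing to keys under the given prefix.
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}/*"]}},
            },
            {
                "Sid": "ReadObjectsInPrefix",
                "Effect": "Allow",
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}/*",
            },
        ],
    }


# Hypothetical bucket and prefix, for illustration only.
policy = read_only_prefix_policy("example-data-lake", "curated")
policy_json = json.dumps(policy, indent=2)
print(policy_json)
```

Because the policy contains no write or delete actions, an analyst role using it could query curated data without being able to alter it.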

Understanding theory is great, but seeing ETL in action through real-world examples helps solidify these concepts.

Real-world examples of ETL implementations

Looking at how leading companies use ETL pipelines on AWS offers practical insights for small and medium-sized businesses (SMBs) building their own data lakes. The tools and architecture may scale across business sizes, but the core principles remain consistent.

Sisense: Flexible, multi-source data integration

Business intelligence company Sisense built a data lake on AWS to handle multiple data sources and analytics tools.

Using Amazon S3, AWS Glue, and Amazon Redshift, they established ETL workflows that streamlined reporting and dashboard performance, demonstrating how AWS services can support diverse, evolving data needs.

IronSource: real-time, event-driven processing

To manage rapid growth, IronSource implemented a streaming ETL model using Amazon Kinesis and AWS Lambda.

This setup enabled them to handle real-time mobile interaction data efficiently. For SMBs dealing with high-frequency or time-sensitive data, this model offers a clear path to scalability.

SimilarWeb: scalable big data processing

SimilarWeb uses Amazon EMR and Amazon S3 to process vast amounts of digital traffic data daily. Their Spark-powered ETL workflows are optimized for high-volume transformation tasks, a strategy that suits SMBs looking to modernize legacy data systems while preparing for advanced analytics.

AWS partners, such as Cloudtech, work with multiple such SMB clients to implement similar AWS-based ETL architectures, helping them build scalable and cost-effective data lakes tailored to their growth and analytics goals.

Choosing tools and technologies for ETL processes

For SMBs building or modernizing a data lake on AWS, selecting the right tools is key to building efficient and scalable ETL workflows. The choice depends on business size, data complexity, and the need for real-time or batch processing.

1. Evaluating AWS Glue for data cataloging and ETL

AWS Glue provides a serverless environment for data cataloging, cleaning, and transformation. It integrates well with Amazon S3 and Redshift, supports Spark-based ETL jobs, and includes features like Glue Studio for visual pipeline creation.

For SMBs looking to avoid infrastructure management while keeping costs predictable, AWS Glue is a reliable and scalable option.

2. Considering Amazon Kinesis for real-time data processing

Amazon Kinesis is ideal for SMBs that rely on time-sensitive data from IoT devices, applications, or user interactions. It supports real-time ingestion and processing with low latency, enabling quicker decision-making and automation.

When paired with AWS Lambda or Glue streaming jobs, it supports dynamic ETL workflows without overcomplicating the architecture.

3. Assessing Upsolver for automated data workflows

Upsolver is a third-party tool that runs on AWS and simplifies ETL and ELT pipelines by automating tasks like job orchestration, schema management, and error handling.

Because it operates within the AWS ecosystem, it is often considered by SMBs that want faster deployment times without building custom pipelines. Cloudtech helps evaluate when tools like Upsolver fit into the broader modernization roadmap.

Choosing the right mix of AWS services ensures that ETL workflows are not only efficient but also future-ready. AWS partners like Cloudtech support SMBs in assessing tools based on their use cases, guiding them toward solutions that align with their cost, scale, and performance needs.
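
For the Kinesis-plus-Lambda pairing mentioned above, a handler might look roughly like the sketch below. It relies on the fact that Kinesis delivers each record's payload to Lambda as base64-encoded data under `Records[].kinesis.data`; the payload fields and the sample event are hypothetical.

```python
import base64
import json


def handler(event, context):
    """Decode Kinesis records, keep valid JSON objects, and tag each one."""
    transformed = []
    for record in event["Records"]:
        payload = base64.b64decode(record["kinesis"]["data"])
        try:
            item = json.loads(payload)
        except ValueError:
            continue  # skip payloads that are not valid JSON
        if not isinstance(item, dict):
            continue  # this sketch only handles JSON objects
        item["partition_key"] = record["kinesis"]["partitionKey"]
        transformed.append(item)
    return {"processed": len(transformed), "items": transformed}


def _encode(obj):
    """Helper for building a sample event: JSON-encode, then base64-encode."""
    return base64.b64encode(json.dumps(obj).encode("utf-8")).decode("ascii")


# Hypothetical event with one valid and one malformed record.
sample_event = {
    "Records": [
        {"kinesis": {"partitionKey": "device-1", "data": _encode({"temp_c": 21.5})}},
        {"kinesis": {"partitionKey": "device-2",
                     "data": base64.b64encode(b"not json").decode("ascii")}},
    ]
}
result = handler(sample_event, None)
print(result)
```

A production handler would typically write the transformed items to a destination such as Amazon S3 and route malformed records somewhere for inspection instead of silently dropping them.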

How Cloudtech supports SMBs with ETL on AWS

Cloudtech is an advanced cloud modernization and AWS Tier Partner focused on helping SMBs build efficient ETL pipelines and data lakes on AWS. Cloudtech helps with:

- Data modernization: Upgrading data infrastructures for improved performance and analytics, helping businesses unlock more value from their information assets through Amazon Redshift implementation.

- Application modernization: Revamping legacy applications to become cloud-native and scalable, ensuring seamless integration with modern data warehouse architectures.

- Infrastructure and resiliency: Building secure, resilient cloud infrastructures that support business continuity and reduce vulnerability to disruptions through proper Amazon Redshift deployment and optimization.

- Generative artificial intelligence: Implementing AI-driven solutions that leverage Amazon Redshift's analytical capabilities to automate and optimize business processes.

Cloudtech simplifies the path to modern ETL, enabling SMBs to gain real-time insights, meet compliance standards, and grow confidently on AWS.

Conclusion

Cloudtech helps SMBs simplify complex data workflows, making cloud-based ETL accessible, reliable, and scalable.

Building efficient ETL pipelines is crucial for SMBs to utilize a data lake on AWS fully. By adopting AWS-native tools such as AWS Glue, Amazon S3, and Amazon Athena, businesses can simplify data processing while ensuring scalability, security, and cost control. Following best practices in data ingestion, transformation, and governance helps unlock actionable insights and supports better business decisions.

Cloudtech specializes in guiding SMBs through this cloud modernization journey. With expertise in AWS and a focus on SMB requirements, Cloudtech delivers customized ETL solutions that enhance data reliability and operational efficiency.

Partners like Cloudtech help to design and implement scalable, secure ETL pipelines on AWS tailored to your business goals. Reach out today to learn how Cloudtech can help improve your data strategy.

FAQs

1. What is an ETL pipeline?

ETL stands for extract, transform, and load. It is a process that collects data from multiple sources, cleans and organizes it, then loads it into a data repository such as a data lake or data warehouse for analysis.

2. Why are ETL pipelines important for SMBs?

ETL pipelines help SMBs consolidate diverse data sources into one platform, enabling better business insights, streamlined operations, and faster decision-making without managing complex infrastructure.

3. Which AWS services are commonly used for ETL?

Key AWS services include AWS Glue for data cataloging and transformation, Amazon S3 for data storage, Amazon Athena for querying data directly from S3, and Amazon Kinesis for real-time data ingestion.

4. How does Cloudtech help with ETL implementation?

Cloudtech supports SMBs in designing, building, and optimizing ETL pipelines using AWS-native tools. They provide tailored solutions with a focus on security, compliance, and performance, especially for healthcare and regulated industries.

5. Can ETL pipelines handle real-time data processing?

Yes, AWS services like Amazon Kinesis and AWS Glue Streaming support real-time data ingestion and transformation, enabling SMBs to act on data as it is generated.

Amazon Elastic Container Service (Amazon ECS) and Amazon Elastic Kubernetes Service (Amazon EKS) simplify how businesses run and scale containerized applications by removing the burden of managing the underlying infrastructure. Unlike self-managed open-source options that demand significant in-house expertise, these managed AWS services automate deployment and security, making them a strong fit for teams focused on speed and growth.

The impact is evident. The global container orchestration market reached $332.7 million in 2018 and is projected to surpass $1,382.1 million by 2026, driven largely by businesses adopting cloud-native architectures.

While both services help you deploy, manage, and scale containers, they differ significantly in how they operate, who they’re ideal for, and the level of control they offer.

This guide provides a detailed comparison of Amazon ECS vs EKS, highlighting the technical and operational differences that matter most to businesses ready to modernize their application delivery.

Key Takeaways

- Amazon ECS and Amazon EKS both deliver managed container orchestration, but Amazon ECS focuses on simplicity and deep AWS integration, while Amazon EKS offers portability and advanced Kubernetes features.

- Amazon ECS is a strong fit for businesses seeking rapid deployment, cost control, and minimal operational overhead, while Amazon EKS suits teams with Kubernetes expertise, complex workloads, or hybrid and multi-cloud needs.

- Pricing structures differ: Amazon ECS has no control plane fees, while Amazon EKS charges a management fee per cluster in addition to resource costs.

- Partnering with Cloudtech gives businesses expert support in evaluating, adopting, and optimizing Amazon ECS or Amazon EKS, ensuring the right service is chosen for long-term growth and reliability.

What is Amazon ECS?

Amazon ECS is a fully managed container orchestration service that helps organizations easily deploy, manage, and scale containerized applications. It integrates AWS configuration and operational best practices directly into the platform, eliminating the complexity of managing control planes or infrastructure components.

The service operates through three distinct layers that provide comprehensive container management capabilities:

- Capacity layer: The infrastructure foundation where containers execute, supporting Amazon EC2 instances, AWS Fargate serverless compute, and on-premises deployments through Amazon ECS Anywhere.

- Controller layer: The orchestration engine that deploys and manages applications running within containers, handling scheduling, availability, and resource allocation.

- Provisioning layer: The interface tools that enable interaction with the scheduler for deploying and managing applications and containers.

Key features of Amazon ECS

Amazon Elastic Container Service (ECS) is purpose-built to simplify container orchestration, without overwhelming businesses with infrastructure management.

Whether you're running microservices or batch jobs, Amazon ECS offers impactful features and tightly integrated components that make containerized applications easier to deploy, secure, and scale.

- Serverless integration with AWS Fargate: AWS Fargate is directly integrated into Amazon ECS, removing the need for server management, capacity planning, and manual container workload isolation. Businesses define their application requirements and select AWS Fargate as the launch type, allowing AWS Fargate to automatically manage scaling and infrastructure.

- Autonomous control plane operations: Amazon ECS operates as a fully managed service, with AWS configuration and operational best practices built in. There is no need for users to manage control planes, nodes, or add-ons, which significantly reduces operational overhead and ensures enterprise-grade reliability.

- Security and isolation by design: The service integrates natively with AWS security, identity, and management tools. This enables granular permissions for each container and provides strong isolation for application development. Organizations can deploy containers that meet the security and compliance standards expected from AWS infrastructure.

Key components of Amazon ECS

Amazon ECS relies on a few core components to run containers efficiently. From defining how containers run to keeping your applications available at all times, each plays an important role.

- Task definitions: JSON-formatted blueprints that specify how containers should execute, including resource requirements, networking configurations, and security settings.

- Clusters: The infrastructure foundation where applications operate, providing the computational resources necessary for container execution.

- Tasks: Individual instances of task definitions representing running applications or batch jobs.

- Services: Long-running applications that maintain desired capacity and ensure continuous availability.

Together, these features and components enable businesses to focus on building and deploying applications without being hindered by infrastructure complexity.
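
Since task definitions are JSON-formatted blueprints, a minimal one can be sketched as a Python dictionary and serialized. The example below targets AWS Fargate; the family name, image, and port are hypothetical, and fields a real deployment usually needs (such as an execution role and logging configuration) are left out for brevity.

```python
import json


def fargate_task_definition(family, image, cpu="256", memory="512", port=80):
    """Build a minimal ECS task definition for the Fargate launch type."""
    return {
        "family": family,
        "networkMode": "awsvpc",  # required network mode for Fargate tasks
        "requiresCompatibilities": ["FARGATE"],
        "cpu": cpu,        # task-level CPU units, as a string
        "memory": memory,  # task-level memory in MiB, as a string
        "containerDefinitions": [
            {
                "name": family,
                "image": image,
                "essential": True,
                "portMappings": [{"containerPort": port, "protocol": "tcp"}],
            }
        ],
    }


# Hypothetical family and image names, for illustration only.
task_def = fargate_task_definition("web-app", "example.com/web-app:1.0")
task_def_json = json.dumps(task_def, indent=2)
print(task_def_json)
```

The resulting JSON has the same shape as a task definition registered through the console or CLI, which is why keeping it in version control alongside application code is a common practice.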

Amazon ECS deployment models

Amazon ECS provides businesses with the flexibility to run containers in a manner that aligns with their specific needs and resources. Here are the main deployment models that cover a range of preferences, from fully managed to self-managed environments.

- AWS Fargate Launch Type: A serverless, pay-as-you-go compute engine that lets teams focus on their applications without managing servers. AWS Fargate automatically manages capacity needs, operating system updates, compliance requirements, and resiliency.

- Amazon EC2 Launch Type: Organizations choose instance types, manage capacity, and maintain control over the underlying infrastructure. This model suits large workloads requiring price optimization and granular infrastructure control.

- Amazon ECS Anywhere: Provides support for registering external instances, such as on-premises servers or virtual machines, to Amazon ECS clusters. This option enables consistent container management across cloud and on-premises environments.

Each deployment model supports a range of business needs, making it easier to match the service to specific use cases.

How businesses can use Amazon ECS

Amazon ECS supports a wide range of business needs, from updating legacy systems to handling advanced analytics and data processing. These use cases highlight how the service can help businesses address real-world challenges and scale with confidence.

- Application modernization: The service empowers developers to build and deploy applications with improved security features in a fast, standardized, compliant, and cost-efficient manner. Businesses can use this capability to modernize legacy applications without extensive infrastructure investments.

- Automatic web application scaling: Amazon ECS automatically scales and runs web applications across multiple Availability Zones, delivering the performance, scale, reliability, and availability of AWS infrastructure. This capability is particularly beneficial for businesses that experience variable traffic patterns.

- Batch processing support: Organizations can plan, schedule, and run batch computing workloads across AWS services, including Amazon EC2, AWS Fargate, and Amazon EC2 Spot Instances. This flexibility enables cost-effective processing of periodic workloads common in business operations.

- Machine learning model training: Amazon ECS supports training natural language processing and other artificial intelligence and machine learning models without managing infrastructure by using AWS Fargate. Businesses can use this capability to implement data-driven solutions without significant infrastructure investments.

While Amazon ECS offers a seamless way to manage containerized workloads with deep AWS integration, some businesses prefer the flexibility and portability of Kubernetes, especially when operating in hybrid or multi-cloud environments. That’s where Amazon EKS comes in.

What is Amazon EKS?

Amazon Elastic Kubernetes Service (EKS) is a managed Kubernetes service that simplifies running Kubernetes on AWS and on-premises environments. This eliminates the need for organizations to install and operate their own Kubernetes control plane.

Kubernetes serves as an open-source system for automating the deployment, scaling, and management of containerized applications, while Amazon EKS provides the managed infrastructure to support these operations.

The service automatically manages the availability and scalability of Kubernetes control plane nodes, which are responsible for scheduling containers, managing application availability, storing cluster data, and executing other critical tasks. Amazon EKS is certified Kubernetes-conformant, ensuring existing applications running on upstream Kubernetes remain compatible with Amazon EKS.

Key features of Amazon EKS

Amazon EKS combines features that enable businesses to run Kubernetes clusters with reduced manual effort and enhanced security. Here are the key capabilities that make the service practical and reliable for a range of workloads.

- Amazon EKS Auto Mode: This feature fully automates the management of the Kubernetes cluster infrastructure, including compute, storage, and networking. Auto Mode provisions infrastructure, scales resources, optimizes costs, applies patches, manages add-ons, and integrates with AWS security services with minimal user intervention.

- High availability and scalability: The managed control plane is automatically distributed across three Availability Zones for fault tolerance and automatic scaling, ensuring uptime and reliability.

- Security and compliance integration: Amazon EKS integrates with AWS Identity and Access Management, encryption, and network policies to provide fine-grained access control, compliance, and security for workloads.

- Smooth AWS service integration: Native integration with services such as Elastic Load Balancing, Amazon CloudWatch, Amazon Virtual Private Cloud, and Amazon Route 53 for networking, monitoring, and traffic management.

Key Components of Amazon EKS

To support these features, Amazon EKS includes several key components that act as its operational backbone:

- Managed control plane: The managed control plane is the core Kubernetes control plane managed by AWS. It includes the Kubernetes API server, etcd database, scheduler, and controller manager, and is responsible for cluster orchestration, health monitoring, and high availability across multiple AWS Availability Zones.

- Managed node groups: Managed node groups are Amazon EC2 instances or groups of instances that run Kubernetes worker nodes. AWS manages their lifecycle, updates, and scaling, allowing organizations to focus on workloads rather than infrastructure.

- Amazon EKS add-ons: These are curated sets of Kubernetes operational software (such as CoreDNS and kube-proxy) provided and managed by AWS to extend cluster functionality and ensure smooth integration with AWS services.

- Service integrations (AWS Controllers for Kubernetes): These controllers allow Kubernetes clusters to directly manage AWS resources (such as databases, storage, and networking) from within Kubernetes, enabling cloud-native application patterns.

Together, these capabilities and components make Amazon EKS a practical choice for businesses seeking flexibility, security, and operational simplicity, whether running in the cloud or on-premises.

What deployment options are available for Amazon EKS?

Amazon EKS provides several options for businesses to run their Kubernetes workloads, each with its own unique balance of control and convenience. Here are the primary deployment options that enable organizations to align their resources and goals.

- Amazon EC2 Node Groups: Organizations choose instance types, pricing models (on-demand, spot, reserved), and node counts, providing high control with higher management responsibility.

- AWS Fargate Integration: AWS Fargate eliminates node management but costs scale linearly with pod usage, making it suitable for applications with predictable resource requirements.

- AWS Outposts: Enterprise hybrid model with custom pricing, typically not cost-efficient for small teams but ideal for organizations requiring on-premises Kubernetes capabilities.

- Amazon EKS Anywhere: No AWS charges, but organizations manage everything and lose cloud-native elasticity unless combined with autoscalers.

These deployment choices open up a range of practical use cases for businesses across different industries and technical requirements.

How can businesses use Amazon EKS?

Amazon EKS supports a variety of business needs, from building reliable applications to supporting data science teams. These use cases demonstrate how the service enables organizations to manage complex workloads and remain flexible as requirements evolve.

- High-availability applications deployment: Using Elastic Load Balancing ensures applications remain highly available across multiple Availability Zones. This capability supports mission-critical applications requiring continuous operation.

- Microservices architecture development: Organizations can utilize Kubernetes service discovery features with AWS Cloud Map or Amazon VPC Lattice to build resilient systems. This approach enables scalable, maintainable application architectures.

- Machine learning workload execution: Amazon EKS supports popular machine learning frameworks such as TensorFlow, MXNet, and PyTorch. With GPU support, organizations can handle complex machine learning tasks effectively.

- Hybrid and multi-cloud deployments: The service enables consistent operation on-premises and in the cloud using Amazon EKS clusters, features, and tools to run self-managed nodes on AWS Outposts or Amazon EKS Hybrid Nodes.

Comparing these Amazon services helps businesses identify where each service excels and what sets them apart. Choosing between the two depends on your team's expertise, application needs, and the level of control you want over your orchestration layer.

Key differences between Amazon ECS and Amazon EKS

Amazon ECS is a fully managed, AWS-native service that’s simpler to set up and use. On the other hand, Amazon EKS is built on Kubernetes, offering more flexibility and portability for teams already invested in the Kubernetes ecosystem.

When comparing Amazon ECS and Amazon EKS, several key differences emerge in how they handle orchestration, integration, and day-to-day management.

| Aspect | Amazon ECS | Amazon EKS |
| --- | --- | --- |
| Orchestration Engine | AWS-native container orchestration system | Kubernetes-based open-source orchestration platform |
| Setup & Operational Complexity | Easy to set up with minimal learning curve; ideal for teams familiar with AWS | More complex setup; requires Kubernetes knowledge and deeper configuration |
| Learning Requirements | Basic AWS and container knowledge | Requires AWS + Kubernetes expertise |
| Service Integration | Deep integration with AWS tools (IAM, CloudWatch, VPC); better for AWS-centric workloads | Native Kubernetes experience with AWS support; works across cloud and on-premises environments |
| Portability | Strong AWS lock-in; limited portability to other platforms | Reduced vendor lock-in; supports multi-cloud and hybrid deployments |
| Pricing – Control Plane | No additional control plane charges | $0.10/hour/cluster (Standard Support) or $0.60/hour/cluster (Extended Support) |
| Pricing – General | Pay only for AWS compute (Amazon EC2, AWS Fargate, etc.) | Pay for compute + control plane + optional EKS-specific features |
| EKS Auto Mode | Not applicable | Additional fee based on instance type + standard EC2 costs |
| Hybrid Deployment (AWS Outposts) | No extra Amazon ECS charge; control plane runs in the cloud | The same Amazon EKS control plane pricing applies on Outposts |
| Version Support | Not version-bound | 14 months (Standard), 26 months (Extended) for Kubernetes versions |
| Networking | Supports multiple network modes (awsvpc task networking, bridge, host); native IAM; each AWS Fargate task gets its own ENI | VPC-native with CNI plugin; supports IPv6; pod-level IAM requires configuration |
| Security & Compliance | Tight AWS IAM integration; strong isolation per task | Fine-grained access control via IAM; supports network policies and encryption |
| Monitoring & Observability | Amazon CloudWatch, Container Insights, AWS Config for auditing | Amazon CloudWatch, Amazon GuardDuty, Amazon EKS runtime protection, deeper Kubernetes telemetry |
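
To make the control plane pricing in the comparison concrete, here is a small worked calculation. It uses only the hourly rates quoted above and assumes a 730-hour month (8,760 hours divided by 12); compute, EKS Auto Mode fees, and other charges are deliberately left out, so treat the figures as a rough illustration rather than a bill estimate.

```python
# Hourly control plane rates (USD per cluster), as quoted in the comparison above.
ECS_CONTROL_PLANE_RATE = 0.00   # ECS has no separate control plane charge
EKS_STANDARD_RATE = 0.10        # Kubernetes version in standard support
EKS_EXTENDED_RATE = 0.60        # Kubernetes version in extended support

HOURS_PER_MONTH = 730  # assumption: average month length in hours


def monthly_control_plane_cost(rate_per_hour: float, clusters: int = 1,
                               hours: int = HOURS_PER_MONTH) -> float:
    """Return the control plane cost in USD for the given number of clusters."""
    return round(rate_per_hour * hours * clusters, 2)


if __name__ == "__main__":
    scenarios = [
        ("Amazon ECS", ECS_CONTROL_PLANE_RATE),
        ("Amazon EKS (standard support)", EKS_STANDARD_RATE),
        ("Amazon EKS (extended support)", EKS_EXTENDED_RATE),
    ]
    for label, rate in scenarios:
        cost = monthly_control_plane_cost(rate, clusters=1)
        print(f"{label}: ${cost:.2f} per cluster per month")
```

Under these assumptions, a single EKS cluster adds roughly $73 per month in standard support and $438 per month in extended support, which is why keeping Kubernetes versions current matters for cost as well as for security.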

The core differences between Amazon ECS and Amazon EKS enable businesses to make informed decisions based on their technical capabilities, resource needs, and long-term objectives. However, to choose the right fit, it's just as important to consider practical use cases.

When to choose Amazon ECS or Amazon EKS?

Selecting the right container service depends on your team’s expertise, workload complexity, and operational priorities. Below are common business scenarios to help you determine whether Amazon ECS or Amazon EKS is the better fit for your application needs.

Choose Amazon ECS when:

Some situations require a service that keeps things straightforward and allows teams to move quickly. These points highlight when Amazon ECS is the right match for business needs.

- Operational simplicity is the priority: Amazon ECS excels when organizations value simplicity and prefer an opinionated, AWS-native solution. The service is ideal for teams new to containers or those seeking rapid deployment without complex configuration requirements.

- Deep AWS integration is required: Organizations fully committed to the AWS ecosystem benefit from smooth integration with AWS services, including AWS Identity and Access Management, Amazon CloudWatch, and Amazon Virtual Private Cloud. This integration accelerates development and reduces operational complexity.

- Cost optimization is essential: Amazon ECS can be more cost-effective, especially for smaller workloads, as it eliminates control plane charges. Businesses benefit from pay-as-you-go pricing across multiple AWS compute options.

- Quick time-to-market is critical: Amazon ECS reduces the time required to build, deploy, or migrate containerized applications successfully. The service enables organizations to focus on application development rather than infrastructure management.

Choose Amazon EKS when:

Some businesses require more flexibility, advanced features, or the ability to run workloads across multiple environments. These points show when Amazon EKS is the better choice.

- Kubernetes expertise is available: Organizations with existing Kubernetes knowledge can draw on the extensive Kubernetes ecosystem and community. Amazon EKS lets teams reuse existing plugins and tooling from that community.

- Portability requirements are crucial: Amazon EKS runs certified-conformant Kubernetes, which reduces vendor lock-in and makes it easier to operate workloads across multiple cloud providers. Applications built for Amazon EKS remain compatible with standard Kubernetes environments.

- Complex workloads require advanced features: Applications requiring advanced Kubernetes features like custom resource definitions, operators, or advanced networking configurations benefit from Amazon EKS. The service supports complex microservices architectures and machine learning workloads.

- Hybrid deployments are necessary: Organizations needing consistent container operation across on-premises and cloud environments can utilize Amazon EKS. The service supports AWS Outposts and Amazon EKS Hybrid Nodes for comprehensive hybrid strategies.
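
The two checklists above can be turned into a rough first-pass screening helper. This is only a sketch: the field names are hypothetical, and the equal weighting of each criterion is an assumption made for illustration, not a rule from AWS or from this article. A real decision would weigh these factors against each other.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    """Yes/no answers mirroring the criteria listed above (hypothetical field names)."""
    # Criteria that point toward Amazon ECS
    prioritizes_simplicity: bool = False
    needs_deep_aws_integration: bool = False
    cost_sensitive: bool = False
    needs_fast_time_to_market: bool = False
    # Criteria that point toward Amazon EKS
    has_kubernetes_expertise: bool = False
    needs_portability: bool = False
    needs_advanced_kubernetes_features: bool = False
    needs_hybrid_deployment: bool = False


def recommend_service(profile: TeamProfile) -> str:
    """Count matching criteria per service; each criterion has equal weight (an assumption)."""
    ecs_score = sum([
        profile.prioritizes_simplicity,
        profile.needs_deep_aws_integration,
        profile.cost_sensitive,
        profile.needs_fast_time_to_market,
    ])
    eks_score = sum([
        profile.has_kubernetes_expertise,
        profile.needs_portability,
        profile.needs_advanced_kubernetes_features,
        profile.needs_hybrid_deployment,
    ])
    if ecs_score > eks_score:
        return "Amazon ECS"
    if eks_score > ecs_score:
        return "Amazon EKS"
    return "No clear preference; evaluate further"


if __name__ == "__main__":
    startup = TeamProfile(prioritizes_simplicity=True, cost_sensitive=True,
                          needs_fast_time_to_market=True)
    print(recommend_service(startup))
```

A tie is reported explicitly rather than broken arbitrarily, since teams that match criteria on both sides usually need a closer look at cost and operational overhead.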

Choosing between Amazon ECS and Amazon EKS can be challenging, particularly when considering the balance of cost, complexity, and future scalability. That’s where partners like Cloudtech step in.

How Cloudtech supports businesses comparing Amazon ECS vs EKS

Cloudtech is an advanced AWS partner that helps businesses evaluate their current infrastructure, technical expertise, and long-term goals to make the right choice between Amazon ECS and Amazon EKS, and supports them every step of the way.

With a team of AWS-certified experts, Cloudtech offers end-to-end cloud transformation services, from crafting customized AWS adoption strategies to modernizing applications with Amazon ECS and Amazon EKS.

By partnering with Cloudtech, businesses can confidently compare Amazon ECS vs. EKS, select the right service for their needs, and receive expert assistance every step of the way, from planning to ongoing optimization.

Conclusion

Selecting between Amazon ECS and Amazon EKS comes down to the specific needs, technical skills, and growth plans of each business. Both services offer managed container orchestration, but the right fit depends on factors such as operational preferences, integration requirements, and team familiarity with container technologies.

For SMBs, this choice has a direct impact on deployment speed, ongoing management, and the ability to scale applications with confidence.

For businesses seeking to maximize their investment in AWS, collaborating with an experienced consulting partner like Cloudtech can clarify the Amazon ECS vs. EKS decision and streamline the path to modern application delivery. Get started with us!

FAQs

- Can Amazon ECS and Amazon EKS run workloads on the same cluster?

No, ECS and EKS are separate orchestration platforms and do not share clusters. Each manages its own resources, so workloads must be deployed to either an ECS or EKS cluster, not both.

- How do ECS and EKS handle IAM permissions differently?

ECS uses AWS IAM roles for tasks and services, making it straightforward to assign permissions directly to containers. EKS, built on Kubernetes, maps IAM roles to Kubernetes service accounts (through IAM roles for service accounts or EKS Pod Identity) and uses IAM to authenticate users to the cluster, which can require extra configuration for fine-grained access.

- Is there a difference in how ECS and EKS support hybrid or on-premises workloads?

ECS Anywhere and EKS Anywhere both extend AWS container management to on-premises environments, but EKS Anywhere offers a Kubernetes-native experience, while ECS Anywhere is focused on ECS APIs and workflows.

- Which service offers simpler integration with AWS Fargate for serverless containers?

Both ECS and EKS support AWS Fargate, but ECS typically offers a more direct and streamlined setup for running serverless containers, with fewer configuration steps compared to EKS.

- How do ECS and EKS differ in their support for multi-region deployments?

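Both services are Regional, so a multi-region deployment means running a separate cluster in each Region. With ECS, teams coordinate those clusters through AWS APIs and service discovery, while EKS relies on Kubernetes-native tools and add-ons for cross-region communication, which may require extra setup and management.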
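
To illustrate the IAM question above, the sketch below builds the two pieces of configuration where an IAM role is attached: the `taskRoleArn` field of an ECS task definition, and the `eks.amazonaws.com/role-arn` annotation on a Kubernetes service account used with IAM roles for service accounts. The role ARN, names, namespace, and image are hypothetical placeholders, and the EKS side assumes the cluster already has an IAM OIDC provider and a matching role trust policy, which is the extra configuration referred to above.

```python
import json

# Hypothetical role ARN used only for illustration.
EXAMPLE_ROLE_ARN = "arn:aws:iam::123456789012:role/example-app-role"


def ecs_task_definition(family: str, image: str, task_role_arn: str) -> dict:
    """ECS: the IAM role is a field on the task definition itself."""
    return {
        "family": family,
        "taskRoleArn": task_role_arn,
        "containerDefinitions": [
            {"name": family, "image": image, "essential": True, "memory": 512}
        ],
    }


def eks_service_account(name: str, namespace: str, role_arn: str) -> dict:
    """EKS: the IAM role is referenced from a ServiceAccount annotation."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {"eks.amazonaws.com/role-arn": role_arn},
        },
    }


if __name__ == "__main__":
    image = "public.ecr.aws/nginx/nginx:latest"  # placeholder image
    print(json.dumps(ecs_task_definition("example-app", image, EXAMPLE_ROLE_ARN), indent=2))
    print(json.dumps(eks_service_account("example-app", "default", EXAMPLE_ROLE_ARN), indent=2))
```

On ECS the role travels with the task definition, so one registration step is enough. On EKS the pod must also name the service account in its spec, and the role's trust policy has to allow that service account, so the permission is spread across several objects.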
